There is a saying that when something is punishable by a fine, it really means “legal, for a price”. Rogue UK employers seem to take this to heart. A shocking investigation by the Bureau of Investigative Journalism published this month shows the penalty system has barely been enforced: out of more than 4,800 firms fined since […]

Category: Research

Originally published for Jacobin. Ever since Robert Brenner published his essay “The Economics of Global Turbulence” in 1998, there has been a wide-ranging debate about his understanding of the period since the 1970s as a “long downturn.” Seth Ackerman and Aaron Benanav have recently been extending this debate in Jacobin. Some of the claims that Benanav puts forward in his reply to Ackerman, largely […]

The original post on Brave New Europe. The gig economy is often talked about as ‘the future of work’, but if we look at history we find that its wage model – paying per output, rather than per hour – actually goes back hundreds of years. In the 19th century, this was called ‘piece wages’, […]

Technological change is often viewed as either an exogenous force, a deus ex machina “outside the domain of economic theory” (Schumpeter 1911:11), or an endogenous force, subordinate to the institutional régulation of capitalism (Gentili et al. 2020; Montalban et al. 2019; Spencer 2017). Drawing on neo-Schumpeterian and régulation theory, this paper sublates these antithetical positions to form an alternative approach that re-examines the […]

Cloudwork is absorbing an increasing proportion of the world’s labour and has been significantly boosted by the COVID-19 pandemic (ILO, 2021). We use cloudwork to refer to remotely performed labour mediated by digital labour platforms – companies that connect workers with clients through a digital interface, exert control over and extract value through the labour process […]

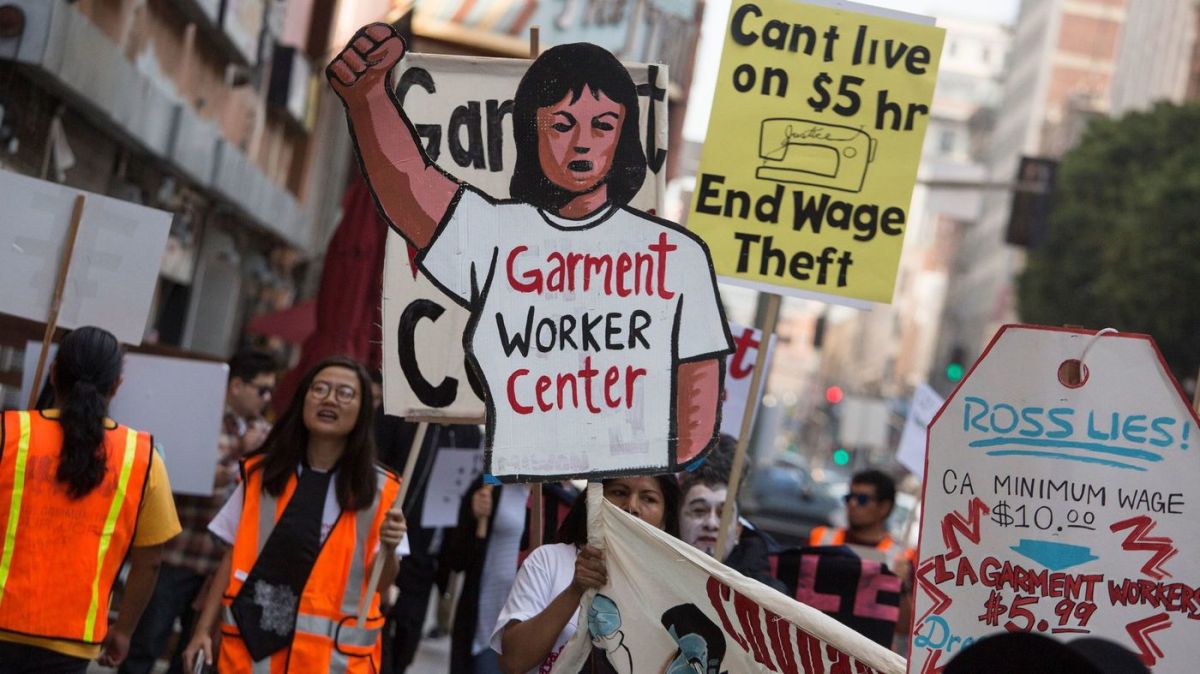

On the Trades Union Congress’ (TUC, 2019) 15th annual ‘Work Your Proper Hours Day’, TUC General Secretary Frances O’Grady asserted, ‘It’s not okay for bosses to steal their workers’ time’, pointing to the chronic theft of workers’ time and money in the United Kingdom (UK). Over five million UK workers laboured a total of two […]

The Wage Theft Epidemic

Originally published for Tribune. Every society with a concept of property also has a concept of theft. They are mutually constitutive, as Proudhon argued in 1840. To privatise public land is a form of theft; to alienate an object from its rightful owner is a form of theft; to plagiarise another person’s idea is a […]

How Value Weaponises the Machine

See original here. For some time now, a façade of techno-optimism has obscured the political reality that technology is not neutral. While Marx saw both oppressive and liberatory potential in technological systems, recent narratives have tended to neglect how technology has historically been used to deepen exploitation, rather than to overcome it. In his new book, Breaking […]

The coronavirus is an exogenous shock to the global economy, causing panic in the financial markets, a jobs apocalypse and an unprecedented crisis in health services. At the same time, the necessary safety measures are challenging the very nature of work and human sociality. Social distancing and lockdowns have been implemented around the world and in many […]

Below is a series of three blogs (part 1, part 2, part 3) I wrote for Autonomy last year on the history of intelligent machines. This serves as an introduction to anyone curious about artificial intelligence and how it might shape the future of digital automation in work and society more generally. Introduction The notion […]